Introduction to Artificial Intelligence

Artificial intelligence concept art (Wenjie Dong, iStockphoto)

Learn about the fast-moving field of artificial intelligence and what it can mean for students.

What is Artificial Intelligence?

To understand artificial intelligence, or AI, you need to understand what intelligence means. You might think that it means being smart. But intelligence is far more than doing well on tests.

Intelligence is complicated. Scientists who study intelligence still have many questions to answer. Are animals intelligent? Do you inherit intelligence from your parents? Does the size of your brain have anything to do with intelligence?

Scientists don't agree on what intelligence means. And they can’t agree on what artificial intelligence means either. Some education organizations have created their own definitions though. AI4All describes AI as “a branch of computer science that allows computers to make predictions and decisions to solve problems.”

This backgrounder will help you understand more about AI.

General and Narrow Artificial Intelligence

There are two main kinds of artificial intelligence: general and narrow. It is important to understand the difference between them.

Artificial General Intelligence

The goal of artificial general intelligence (AGI), or general AI, is to match human intelligence. Many robotics engineers have built human-like robots, but these robots do not yet have intelligence equal to humans. And many experts don’t think general AI will exist any time soon.

General AI is what you usually see in books and movies that take place in a dystopian future. Some examples of this are the Terminator and the Matrix movies. You might think this is a pretty new idea. But artists have been imagining the possible uses - and misuses - of AI for a long time.

Mary Shelley was one of the first authors to imagine what might happen if you gave a non-living thing intelligence. Her novel, Frankenstein, was published in 1818. Some people who study the novel call Dr. Frankenstein’s creature superintelligent. Superintelligent is a word to describe AI that is more advanced than the smartest humans.

Some scientists try to understand human intelligence so they can help develop general AI. They use digital models to copy human behaviours. They try to understand things like how we learn, or how creativity works. Recently, scientists who study the human brain and scientists who study AI have made progress by getting ideas from each other.

Artificial Narrow Intelligence

Artificial narrow intelligence (ANI) is AI we can experience right now. Sometimes this is also called weak AI. This type of AI means machines can take actions and make decisions automatically in certain situations. Self-driving cars, virtual assistants and personalized audio and video recommendations are examples of narrow AI.

Did you know?

In AI, the word “machine” can mean anything from the software used online or in a mobile app, to a humanoid robot. There aren’t many humanoid robots, but they sure are impressive!

A Brief History of Artificial Intelligence

John McCarthy and Marvin Minsky first used the phrase “artificial intelligence” in 1955. At the time, they thought that general AI would be possible within a few years. But the technology needed to research AI was very expensive, and progress was slower than they expected. AI research has sped up recently because scientists have been able to use machine learning. Machine learning is much faster than computer programming by humans.

Machine Learning

Machine learning (ML) is a smaller part of AI. It includes various tools and methods that allow machines to learn without humans programming them. Humans input large amounts of data into an ML system. Then they give the system a desired output. The ML system tries to find the relationship between the two. The machine then produces a specific algorithm from the given output and input.
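As a rough illustration of this idea, the Python sketch below trains a perceptron, a very simple artificial neuron, on a tiny made-up data set. Nobody writes the rule “go to the park only when it is sunny and it is the weekend” into the program. Instead, the program is given inputs and desired outputs, and it adjusts its own numbers until its answers match. The data, the function names and the learning settings are all assumptions chosen for illustration, not how any real product works.

```python
# Toy example: learn a yes/no rule from examples instead of programming it.
# All data and names here are invented for illustration.

# Inputs: (is_sunny, is_weekend). Desired output: 1 = go to the park, 0 = stay home.
EXAMPLES = [
    ((0, 0), 0),
    ((0, 1), 0),
    ((1, 0), 0),
    ((1, 1), 1),
]


def predict(weights, bias, inputs):
    """Return 1 if the weighted sum of the inputs is above zero, else 0."""
    total = bias + sum(w * x for w, x in zip(weights, inputs))
    return 1 if total > 0 else 0


def train(examples, epochs=20, learning_rate=1):
    """Adjust the weights and bias a little every time a prediction is wrong."""
    weights = [0, 0]
    bias = 0
    for _ in range(epochs):
        for inputs, target in examples:
            error = target - predict(weights, bias, inputs)
            weights = [w + learning_rate * error * x for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias


weights, bias = train(EXAMPLES)
for inputs, target in EXAMPLES:
    print(inputs, "wanted", target, "got", predict(weights, bias, inputs))
```

The weights and bias that come out of training play the role of the “specific algorithm” the machine produces from the given input and output.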

For example, social media platforms use recommendation systems. These systems choose what content to show you. The input data includes your browsing history, your likes and your friends’ preferences. It also includes your personal information like age and gender. The desired output is the time you spend on that platform. The longer you spend there, the more money a social media company can make by advertising to you. So the machine’s job is to find out what content will make you stay.

Did you know?

An algorithm is a series of ordered, logical and clear rules or instructions needed to solve a problem or achieve a goal.
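For instance, the short Python sketch below writes out one simple algorithm, finding the largest number in a list, as a series of ordered steps. The function name and the sample numbers are made up for illustration.

```python
def find_largest(numbers):
    """Return the largest value in a non-empty list of numbers."""
    # Step 1: start by assuming the first number is the largest.
    largest = numbers[0]
    # Step 2: look at every remaining number, one at a time.
    for number in numbers[1:]:
        # Step 3: if this number is bigger, remember it instead.
        if number > largest:
            largest = number
    # Step 4: after checking them all, report the answer.
    return largest


print(find_largest([3, 8, 2, 5]))  # prints 8
```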

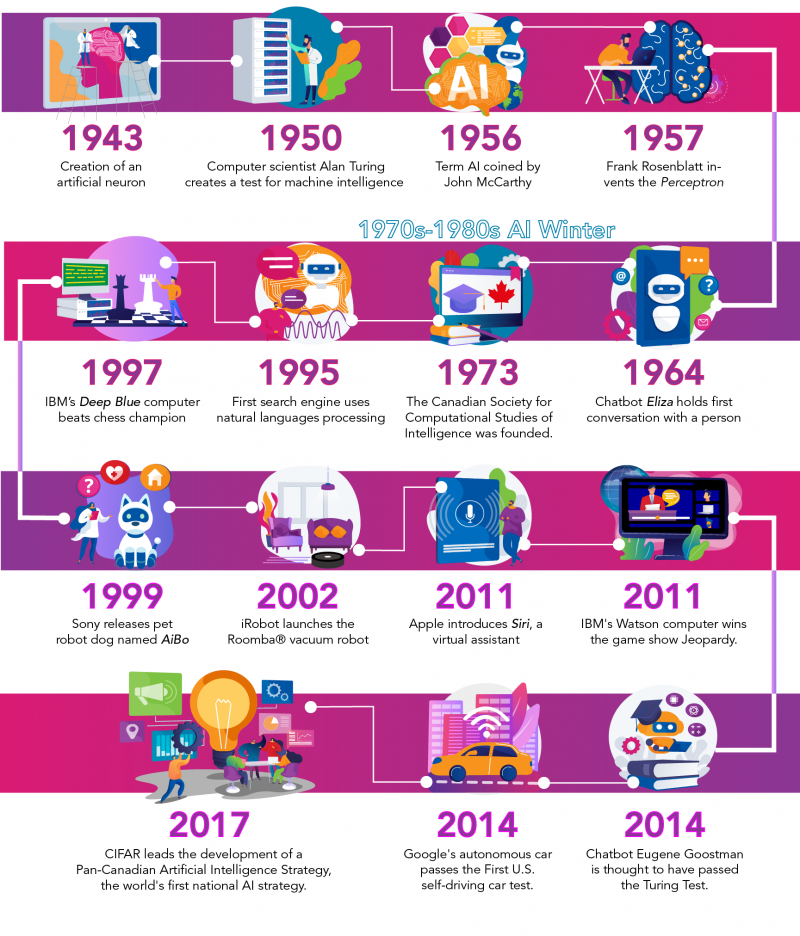

Here are just a few of the major milestones in artificial intelligence:

| Year | Milestone |
|---|---|
| 1943 | Warren S. McCulloch and Walter Pitts create a model for an artificial neuron based on human neurons. |
| 1950 | Computer scientist Alan Turing creates a test for machine intelligence. He called it “the imitation game.” It is now known as the “Turing Test.” |
| 1956 | The phrase Artificial Intelligence was first published by computer scientists John McCarthy and Marvin Minsky. |
| 1957 | Frank Rosenblatt invents the Perceptron. It is the first computer neural network based on the human brain. |
| 1964 | The first chatbot, Eliza, holds conversations with humans. |
| 1970s-1980s | Pessimism about machine learning and a lack of funding lead to the “AI Winter.” |
| 1973 | The Canadian Society for Computational Studies of Intelligence is founded by researchers across the country. This is now called the Canadian Artificial Intelligence Association. It is located at Western University. |
| 1995 | AltaVista is the first search engine to use natural language processing. |
| 1997 | IBM's Deep Blue is the first computer to beat a reigning world chess champion in a match. It defeats Russian grandmaster Garry Kasparov. |
| 1999 | Sony launches a robot pet dog named AIBO. Its skills and personality develop over time. |
| 2002 | iRobot launches the Roomba® floor-vacuuming robot. |
| 2011 | Apple integrates Siri, an intelligent virtual assistant, into the iPhone 4S. |
| 2011 | IBM's Watson computer wins at the TV game show Jeopardy! This showed it could understand complex human language, because questions included word play. |
| 2014 | Some experts think the computer chatbot Eugene Goostman has passed the Turing Test. |
| 2014 | Google's self-driving car passes the Nevada state self-driving car test. |
| 2017 | CIFAR leads the development of a Pan-Canadian Artificial Intelligence Strategy, the world's first national AI strategy. |

Did you know?

The HAL 9000 computer in the movie 2001: A Space Odyssey was based on experts’ opinions about artificial intelligence in 1968.

AI Begins With Math

Data has a story to tell, if you know how to look for it! In the past, people looked for patterns and trends in data by hand. When there is a lot of data, this can be difficult. But computers can speed up the process.

Computers can do the calculations needed to make mathematical models. They can also create visualizations of data. Visualizations are different ways to represent data, like charts and graphs. People can see patterns and trends more easily in visualizations.

Why do we want to find patterns and trends? One reason is to make predictions. Predictive models help humans make decisions in areas like medicine and the environment.
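Here is a minimal sketch of such a predictive model, assuming the trend is roughly a straight line. The Python code below fits a line to a few made-up yearly measurements using ordinary least squares, then extends the line one year ahead. The numbers and function names are invented for illustration. Real models use far more data and are checked carefully before anyone relies on them.

```python
def fit_line(xs, ys):
    """Return (slope, intercept) of the least-squares line through the points."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict_value(slope, intercept, x):
    """Use the fitted line to estimate y for a new x."""
    return slope * x + intercept


# Made-up data: year number and a measurement taken that year.
years = [0, 1, 2, 3, 4]
values = [10.0, 12.1, 13.9, 16.0, 18.0]

slope, intercept = fit_line(years, values)
print("trend per year:", round(slope, 2))
print("estimate for year 5:", round(predict_value(slope, intercept, 5), 2))
```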

So how much data is out there? Short answer - a lot! People collect a lot of data because of the internet and other communication tools. This is often called “Big Data.” Scientists have had to invent bigger and bigger computing systems to process all this data. Cloud computing is a good example. It was developed because regular computers could not handle the amount of data they received. Machine learning systems were also needed to help us learn from the data.

All this data means that the relationship between humans and computers has changed. In the past, humans used computers to help organize and represent data. But humans had to make sense of it. Now, machines are figuring out how to understand and explain vast amounts of data. And humans are helping them. Computers can’t do everything themselves. There are many skills they don’t have, like thinking ahead and combining different ideas.

Optimizing the Human-Computer Relationship

“Human in the loop” machine learning is a way to combine machine and human strengths. A computer’s strength is its computing speed. Humans will never be able to compute as fast as computers. Human strengths include creativity and critical thinking. Humans have the ability to look at a problem from different points of view.

This means humans have many important roles besides building AI tools. For example, a human engineer can participate in testing an airplane’s safety mechanism. Human resources experts can study an AI hiring tool to look for possible biases. And medical experts can help make sure an AI tool is accurate for all patients.
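One common way to put “human in the loop” into practice can be sketched in Python as follows: the machine handles the cases it is confident about, and passes the uncertain ones to a person. The confidence threshold of 0.9, the sample predictions and the function name are assumptions made up for this illustration.

```python
def triage(predictions, threshold=0.9):
    """Split predictions into those accepted automatically and those for human review.

    Each prediction is an (item, label, confidence) tuple, with confidence from 0 to 1.
    """
    automatic = []
    needs_human = []
    for item, label, confidence in predictions:
        if confidence >= threshold:
            automatic.append((item, label))
        else:
            needs_human.append((item, label))
    return automatic, needs_human


# Made-up output from an imaginary image-sorting tool.
predictions = [
    ("photo_1", "cat", 0.98),
    ("photo_2", "dog", 0.55),
    ("photo_3", "cat", 0.91),
]

automatic, needs_human = triage(predictions)
print("accepted automatically:", automatic)
print("sent to a person:", needs_human)
```

Raising the threshold sends more cases to people, which is slower but safer. Lowering it lets the machine decide more often.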

Did you know?

Bias can happen when data used in AI does not reflect reality. For example, a set of medical data needs to represent the characteristics of a population. These include things like age, gender and ethnicity. If it does not, then this data can’t be used to make predictions for the whole population.
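A simple first check for this kind of bias, sketched here in Python, is to compare how often each group appears in a data set with how often it appears in the population. The age groups, counts and population shares below are invented for illustration, and the function name is hypothetical.

```python
def representation_gaps(sample_counts, population_shares):
    """Return each group's share in the sample minus its share in the population.

    A negative number means the group is under-represented in the data.
    """
    total = sum(sample_counts.values())
    gaps = {}
    for group, population_share in population_shares.items():
        sample_share = sample_counts.get(group, 0) / total
        gaps[group] = sample_share - population_share
    return gaps


# Made-up medical data set: number of patient records per age group.
sample_counts = {"under 18": 50, "18 to 64": 900, "65 and over": 50}
# Made-up shares of each age group in the whole population.
population_shares = {"under 18": 0.20, "18 to 64": 0.60, "65 and over": 0.20}

for group, gap in representation_gaps(sample_counts, population_shares).items():
    print(f"{group}: {gap:+.0%}")
```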

Artificial Intelligence Opportunities

AI is a great tool. It can help us find solutions to many problems. This is true in any area where we use data, which is almost everywhere! These are just a few of the ways AI can help us reach some of the United Nations Sustainable Development Goals (SDGs).

SDG 3 - Good Health and Well-being

Some of the most successful ML and AI applications are in the field of medicine. AI and machine learning are being used in research, like fighting cancer or searching for vaccines. Some doctors use AI diagnostic tools to help identify illnesses faster. They can also use AI tools to take notes, analyze their discussions with patients, and enter information directly into medical record systems.

AI can help improve human health in many other areas too. It can analyse social media posts for signs of mental illness. It can also reduce injuries on construction sites, and much more!

SDG 1 - No Poverty and SDG 2 - Zero Hunger

AI tools can be used by governments to find ways to reduce poverty and hunger. For example, they can help farmers detect pests, diseases and poor nutrition in plants. Or they can help to reduce food waste. AI can also be used to help predict and respond to natural disasters, or find out where poverty is happening using satellite images.

SDG 13 - Climate Action, SDG 14 - Life Below Water and SDG 15 - Life on Land

AI can provide much-needed tools to help us protect the environment and fight climate change. AI can use satellite images and data analysis to help scientists identify trends and work on solutions. For example, AI can be used to identify where forest fires could happen, before they start. It can also be used to understand and protect endangered species. Scientists can even combine robotics and AI visual recognition systems to create a robot to help protect ecosystems from invasive species.

Did you know?

The Association for Computing Machinery A.M. Turing Award is sometimes called “the Nobel prize of computer science”. In 2018, the award went to three researchers with strong ties to Canada: Yoshua Bengio, Geoffrey Hinton and Yann LeCun. Their innovative work on neural networks will help computers learn even faster! Soon after their win, the Canadian government helped fund new hubs for AI development in Quebec, Ontario and Alberta.

Artificial Intelligence Concerns

Should we be afraid that artificial intelligence might develop beyond human intelligence? Or that it could become a threat to humans? Experts have been debating these issues for a long time. Some people only see AI’s potential for innovation and problem-solving. Some people, like Elon Musk and Bill Gates, think that AI could be dangerous. They think humans should be very careful about how they develop and use it. That’s why scientists and governments are working together to create regulations for AI. These rules will make sure people develop AI applications with human needs and well-being in mind.

Superintelligence

Some scientists are concerned about the speed of AI development. Computers are now so fast that superintelligence is a real possibility. Superintelligence is the idea that machine “brains” could someday be better than human brains in general intelligence.

“Humans, limited by slow biological evolution, couldn’t compete and would be superseded by A.I.”

But many other scientists think artificial general intelligence will not be developed any time soon. They also think there are many other problems we need to think about first.

Job Displacement

AI is already changing the job market. Robots controlled by AI are doing many things that humans used to do. People are worried that they will be replaced by machines.

During the industrial revolution, people worried about the same thing. New factories made many manual jobs obsolete. But factories also created millions of new jobs.

Some experts predict that AI will make some jobs obsolete, but it will also create more jobs. Some of them call this the fourth industrial revolution. This change will affect jobs that involve manual labour. But it will also affect office jobs where people make routine decisions. These tasks can be automated with AI systems.

Ethical Considerations

The number of new artificial intelligence technologies has exploded in recent years. AI can offer many advantages, but it can also create problems. Because AI is developing so quickly, many problems are only discovered when a technology is already in use. Also, many governments have not developed regulations for AI.

This is why the United Nations Educational, Scientific and Cultural Organization (UNESCO) started AI: Towards a Humanistic Approach. The goal of this project is to look at the many ethical concerns in AI and help governments make sure AI serves their people in a fair and just way.

Equity, Diversity and Inclusion

When AI is applied to a human population, equity, diversity and inclusion (EDI) issues can arise. If the dataset used does not represent the entire population, AI tools can produce questionable results.

For example, some police organizations in the United States use AI facial recognition tools. But this has led to people from visible minorities being wrongfully arrested. This is because many facial recognition systems are less accurate at identifying people with darker skin, often because they were trained mostly on images of people with lighter skin.
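One way to spot this kind of problem, sketched below in Python, is to measure a tool's accuracy separately for each group instead of reporting a single overall score. The groups, test results and function name are all made up for illustration and do not describe any real system.

```python
from collections import defaultdict


def accuracy_by_group(results):
    """Return the fraction of correct answers for each group.

    Each result is a (group, was_correct) pair.
    """
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, was_correct in results:
        total[group] += 1
        if was_correct:
            correct[group] += 1
    return {group: correct[group] / total[group] for group in total}


# Made-up test results for an imaginary recognition tool.
results = (
    [("group A", True)] * 95 + [("group A", False)] * 5
    + [("group B", True)] * 70 + [("group B", False)] * 30
)

overall = sum(was_correct for _, was_correct in results) / len(results)
print("overall accuracy:", overall)
print("accuracy by group:", accuracy_by_group(results))
```

In this invented example the overall score looks good, but it hides a much lower score for one of the groups.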

There are also EDI issues to think about when we consider the opportunities created by AI. Governments need to make sure all of their citizens have equal access to job opportunities. One way to do this is to help all students learn about AI in school. Also, AI already plays a role in our daily lives. So, it’s important for all students to learn about it so they can become informed citizens.

Transparency

It is very important to understand how AI systems work. This is called AI explainability. Explainability is about being able to understand how an AI system gets its results. But explainability is not always easy to achieve. The firms that create AI systems often keep the details private to protect their intellectual property. And sometimes AI algorithms are so complex that it’s almost impossible to understand the processes involved. This is called the black box effect. It is a problem when people want to understand the decision-making process, or figure out how biases arise. Scientists around the world are studying it to find solutions.

Experts also worry about who controls the research efforts, funding and discoveries in AI. Right now, a few private companies are responsible for most AI research and progress. Some people think all this power should be distributed among more organizations.

Liability

Liability is the legal responsibility of a person or organization in a conflict. But what happens when AI is involved? If a self-driving car is in an accident, who is responsible? The driver, the company that developed the software, or the AI engineer?

In conclusion...

The field of AI is especially important for young people. Its impact on their lives will only increase. But becoming more familiar with AI does not have to mean taking computer science classes. You can learn about AI in many different ways.

To prepare for a future with AI, Canadian youth are encouraged to:

- develop awareness of its presence and recognize when it is used

- understand the potential of AI so they can use it to solve problems

- understand the limitations and ethical implications of AI so they can make sure that future developments integrate these considerations into design and monitoring

- reflect on the impact AI development could have on their own agency and think about how it could affect their education paths and career choices

- become informed citizens who can be involved in the responsible use of AI in many fields

We may not know exactly what the future holds, but we can be pretty sure that AI will play an important role. And many scientists in Canada are working hard to figure out how humans and machines can build a better world together. Will you join them?

Learn More

What The Kids’ Game “Telephone” Taught Microsoft About Biased AI (2017)

This article by Fast Company illustrates the five types of bias that can be found in machine learning applications.

Myths and Facts About Superintelligent AI (2017)

This video (4:13 min.) from minutephysics discusses common misconceptions and true facts about superintelligence.

AI Can Outperform Doctors. So Why Don’t Patients Trust It?

This video (3:28 min.) by The Medical Futurist discusses why AI applications are not trusted by patients yet.

The Rise of the Machines – Why Automation is Different this Time (2017)

This animated video (11:40 min.) by Kurzgesagt – In a Nutshell explains how automation is shaping the present and how it will also shape the future.

The Turing test: Can a computer pass for a human? (2016)

This video (4:42 min.) by TED-Ed explains how the Turing Test was used to evaluate if computers have human-equivalent intelligence.

Frontline - In the Age of AI (2019)

This video documentary (1:56:07 min.) by PBS presents the opportunities and challenges of AI technologies, current and future.

How AI and Machine Learning Work (2019)

A series of 3 videos from Code.org on AI and machine learning.

How Snapchat's filters work (2016)

This video (5:07 min.) by Vox explains how visual recognition works, including Snapchat's filters.

Do you know AI or AI knows you better? Thinking Ethics of AI

This video (18:38 min.) from UNESCO discusses the ethical dilemmas that societies need to consider with regard to AI applications.
